How AI-powered hanging protocols are eliminating manual image setup, accelerating read times, and giving radiologists a smarter, more consistent starting point for every study.

For as long as diagnostic imaging has existed, someone has had to arrange the images. In the film era, technologists physically hung films on lightboxes in the order the radiologist preferred. When PACS digitized the reading room, “hanging” became configuring rules — protocols that told the system how to arrange series, set window levels, and trigger prior comparisons. It was progress, but it was fragile.

Rule-based hanging protocols break constantly. A technologist enters a series description with a comma instead of a colon. A modality upgrade changes a label. A PACS migration scrambles the metadata. Each micro-event silently disables the protocol, leaving the radiologist to manually reorganize a setup that should have been automatic. Today, artificial intelligence is replacing that brittle system with something far more resilient: protocols that learn, adapt, and apply clinical context rather than blindly pattern-matching strings of text.

What Are Hanging Protocols and Why Do They Fail?

A hanging protocol is a defined set of rules governing how medical images are displayed when a study is opened. At minimum, it specifies which series appears on which monitor, what window settings apply, whether prior exams load alongside current images, and how the layout spans multiple displays. Well-designed protocols let a radiologist open a study and begin reading immediately.

The problem is that traditional protocols are built on exact-match logic: they function correctly only when incoming DICOM metadata precisely matches the written rules. In real clinical environments, that data is rarely consistent. Studies arrive from different modalities, different sites, and technologists who describe the same series in a dozen different ways. The result is a perpetual maintenance burden: PACS administrators keep patching standardization tables that never stay current, while radiologists quietly accept misconfigured displays as unavoidable daily friction.
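To make the failure mode concrete, here is a minimal sketch of exact-match rule lookup. The rule table, layout name, and series descriptions are invented for illustration and are not taken from any particular PACS.

```python
# Hypothetical exact-match rule table: series description -> display layout.
EXACT_RULES = {
    "CT Chest": "chest_ct_2x2",
}


def match_exact(series_description):
    """Return the layout for a description, or None when no rule matches exactly."""
    return EXACT_RULES.get(series_description)


# The same kind of series as different sites might label it (made-up variants).
incoming = ["CT Chest", "CT CHEST", "Chest CT", "Thorax", "CT Chest "]

for description in incoming:
    layout = match_exact(description)
    status = layout if layout else "no match, manual setup needed"
    print(f"{description!r}: {status}")
```

Only the first variant matches. A change in capitalization, word order, or a trailing space is enough to leave the protocol unapplied.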

AI Changes the Logic: From Exact Match to Intelligent Inference

The fundamental advance AI brings is the shift from string-matching to image-based inference. Rather than relying on what the DICOM tag says a series is, AI analyzes what the images actually contain — using computer vision and deep learning trained on large annotated datasets to identify anatomy, modality, and imaging phase directly from pixel data.

This means a chest CT is correctly identified and displayed whether the series description reads “CT Chest” or “Thorax,” or was left blank by the technologist. Body part detection, series classification, and orientation normalization all happen automatically, upstream of the display step, so the protocol executes accurately even when metadata is imperfect, which in most production environments is most of the time.
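As a rough sketch of that idea, the display step can key its layout lookup on a label predicted from the pixels rather than on the description string. Everything here is hypothetical: the layout table, the `choose_layout` function, and especially `fake_classifier`, which stands in for a trained model and simply returns a fixed answer.

```python
from typing import Callable, Optional, Tuple

# Hypothetical layouts keyed by what the images contain, not by how they were labeled.
LAYOUT_BY_CONTENT = {
    ("CT", "chest"): "chest_ct_2x2",
    ("MR", "brain"): "brain_mr_3x2",
}


def choose_layout(
    pixels: object,
    classify: Callable[[object], Tuple[str, str]],
    description: Optional[str] = None,
) -> Optional[str]:
    """Pick a layout from the classifier's (modality, body_part) prediction.

    The series description is accepted but deliberately ignored, so a wrong
    or missing label cannot change the result.
    """
    modality, body_part = classify(pixels)
    return LAYOUT_BY_CONTENT.get((modality, body_part))


def fake_classifier(pixels: object) -> Tuple[str, str]:
    # Stand-in for a trained image model (assumption: a real one inspects the pixels).
    return ("CT", "chest")


for description in ["CT Chest", "Thorax", ""]:
    print(repr(description), "->", choose_layout(None, fake_classifier, description))
```

All three descriptions resolve to the same layout because the lookup never consults them. In a real system, the quality of the result depends entirely on the classifier.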

Automatic Prior Selection: The Comparison Problem Solved

One of the most clinically significant failures of legacy protocols is the misidentification of relevant prior studies. For a follow-up oncology CT, the correct prior is the most recent comparable study: same modality, same imaging protocol, same anatomical coverage. Rule-based systems frequently get this wrong, retrieving irrelevant studies or none at all, because prior matching relies on the same fragile metadata that breaks hanging protocols in general.

AI solves this by combining image-content analysis with natural language processing applied to procedure descriptions and clinical context. Rather than matching a prior by keyword, the system evaluates the visual and structural similarity of the imaging data itself. For radiologists managing oncology follow-ups, lung nodule surveillance, or chronic disease monitoring, this is a direct clinical safeguard — the right comparison study loads automatically, every time.
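The selection criterion itself ("most recent comparable study") can be sketched in a few lines. This is a deliberately simplified stand-in, not the image-similarity approach described above: it assumes that `modality` and `body_part` have already been derived reliably, for example by a content-analysis step, and then applies a plain filter and sort. The `Study` class and `select_prior` function are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Study:
    study_id: str
    modality: str
    body_part: str  # assumed to come from content analysis, not a raw tag
    study_date: date


def select_prior(current: Study, candidates: List[Study]) -> Optional[Study]:
    """Return the most recent earlier study with the same modality and body part."""
    comparable = [
        s for s in candidates
        if s.modality == current.modality
        and s.body_part == current.body_part
        and s.study_date < current.study_date
    ]
    return max(comparable, key=lambda s: s.study_date, default=None)


current = Study("C1", "CT", "chest", date(2024, 6, 1))
history = [
    Study("P1", "CT", "chest", date(2023, 1, 10)),
    Study("P2", "CT", "chest", date(2023, 12, 5)),
    Study("P3", "MR", "brain", date(2024, 3, 1)),
    Study("P4", "CT", "abdomen", date(2024, 4, 1)),
]

prior = select_prior(current, history)
print(prior.study_id if prior else "no comparable prior")  # prints "P2"
```

The more recent studies P3 and P4 are skipped because they cover different anatomy or modality, which is the mistake a date-only or keyword-only rule tends to make.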

Personalized Layouts and Adaptive Learning

Modern AI hanging protocol systems go beyond correcting metadata failures — they learn. By observing how radiologists interact with their displays, these systems build individual preference models: which series a reader tends to move first, which window level they consistently adjust, which layout they prefer for specific exam types. Over time, the system applies those learned preferences proactively, before the radiologist even opens the case.

This adaptive layer is particularly valuable in high-volume departments where multiple radiologists read the same exam types with different preferences, or in teleradiology environments where reading assignments rotate. A single AI system can maintain individualized display models for each reader, eliminating the false choice between departmental standardization and individual efficiency. Early implementations have demonstrated image preparation time reductions of up to 50%.
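One very simple way to picture a per-reader preference model is a frequency count of the layouts each reader ends up using for each exam type. The sketch below assumes exactly that and nothing more. The `PreferenceModel` class, reader IDs, and layout names are hypothetical, and a production system would likely weigh far more signals.

```python
from collections import Counter, defaultdict


class PreferenceModel:
    """Track which layout each reader settles on, per exam type."""

    def __init__(self):
        # (reader_id, exam_type) -> Counter of layouts the reader ended up using
        self._counts = defaultdict(Counter)

    def observe(self, reader_id, exam_type, layout):
        """Record the layout a reader was using when they finished a case."""
        self._counts[(reader_id, exam_type)][layout] += 1

    def preferred_layout(self, reader_id, exam_type, default):
        """Return the reader's most frequent layout, or the departmental default."""
        counts = self._counts.get((reader_id, exam_type))
        if not counts:
            return default
        return counts.most_common(1)[0][0]


model = PreferenceModel()
model.observe("dr_a", "chest_ct", "2x2")
model.observe("dr_a", "chest_ct", "2x2")
model.observe("dr_a", "chest_ct", "1x3")
model.observe("dr_b", "chest_ct", "1x3")

print(model.preferred_layout("dr_a", "chest_ct", default="2x2"))  # prints "2x2"
print(model.preferred_layout("dr_b", "chest_ct", default="2x2"))  # prints "1x3"
print(model.preferred_layout("dr_c", "chest_ct", default="2x2"))  # prints "2x2"
```

Readers with no history fall back to the departmental default, so standardization and personalization coexist in the same lookup.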

The Workflow Impact: Faster Reads, Less Fatigue

The cumulative effect is a reading session that begins correctly configured, with the right images, the right layout, and the right priors loaded automatically. In high-volume departments processing hundreds of studies per shift, eliminating two to three minutes of manual setup per case reclaims hours of daily capacity. Beyond throughput, there is a fatigue dimension: the repetitive burden of rearranging displays compounds across a long reading session in ways that are difficult to measure but real. Protocols that work consistently let radiologists arrive at the interpretive portion of each read with their full attention, rather than with attention already drained by image management.
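The capacity claim is simple arithmetic. As a worked example, assume a department reads 200 studies per shift (an illustrative figure; the text says only "hundreds") and saves two to three minutes on each:

```python
def hours_reclaimed(studies_per_shift, minutes_saved_per_study):
    """Total setup time saved across the department in one shift, in hours."""
    return studies_per_shift * minutes_saved_per_study / 60


STUDIES_PER_SHIFT = 200  # assumed volume for illustration

for minutes in (2, 3):
    hours = hours_reclaimed(STUDIES_PER_SHIFT, minutes)
    print(f"{minutes} min x {STUDIES_PER_SHIFT} studies = {hours:.1f} hours")
# prints:
# 2 min x 200 studies = 6.7 hours
# 3 min x 200 studies = 10.0 hours
```

These totals are summed across all readers on the shift, not per radiologist, and they assume every study would otherwise need manual setup.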

RadioView.AI™: Intelligent Display Built for Real Clinical Environments

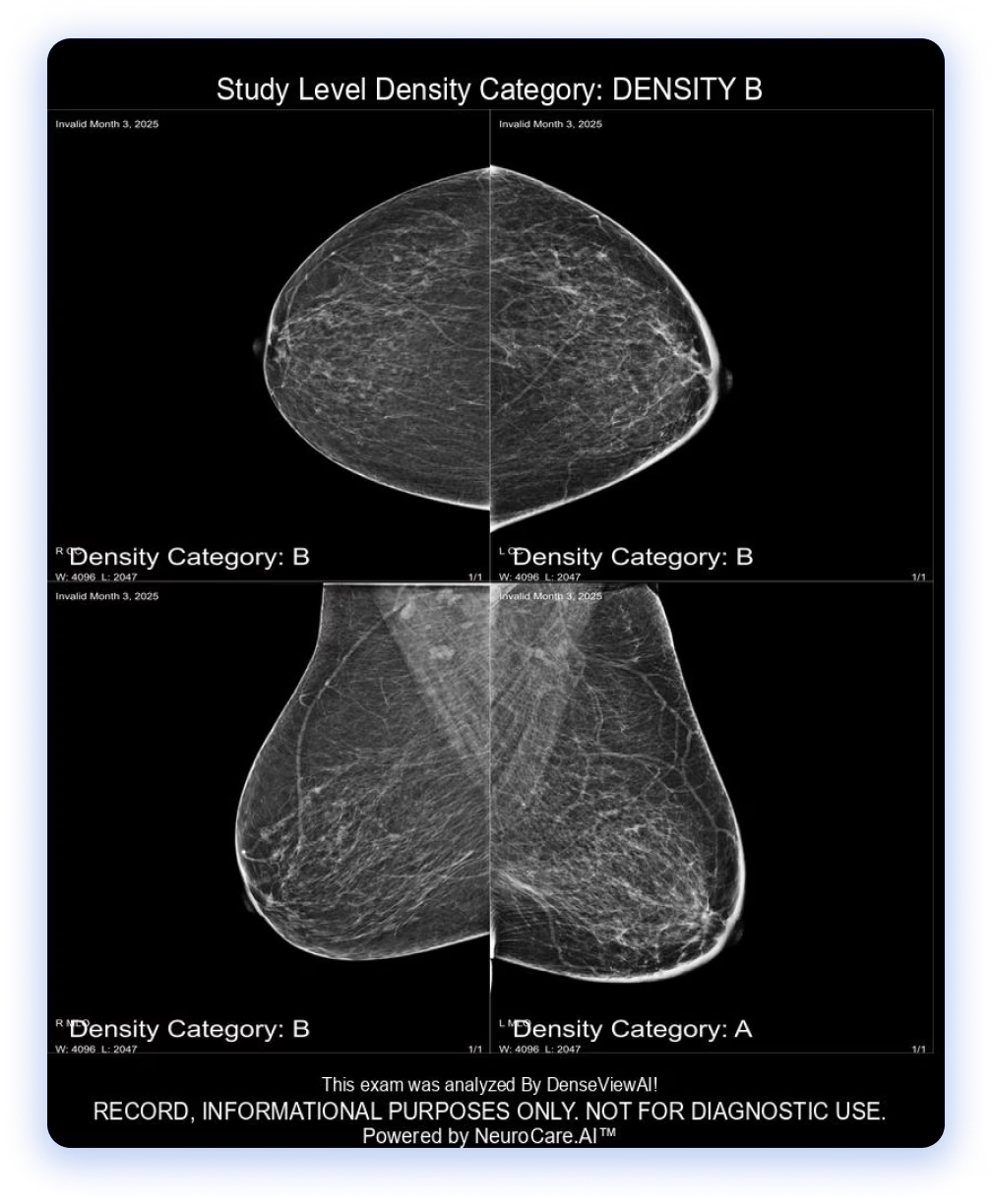

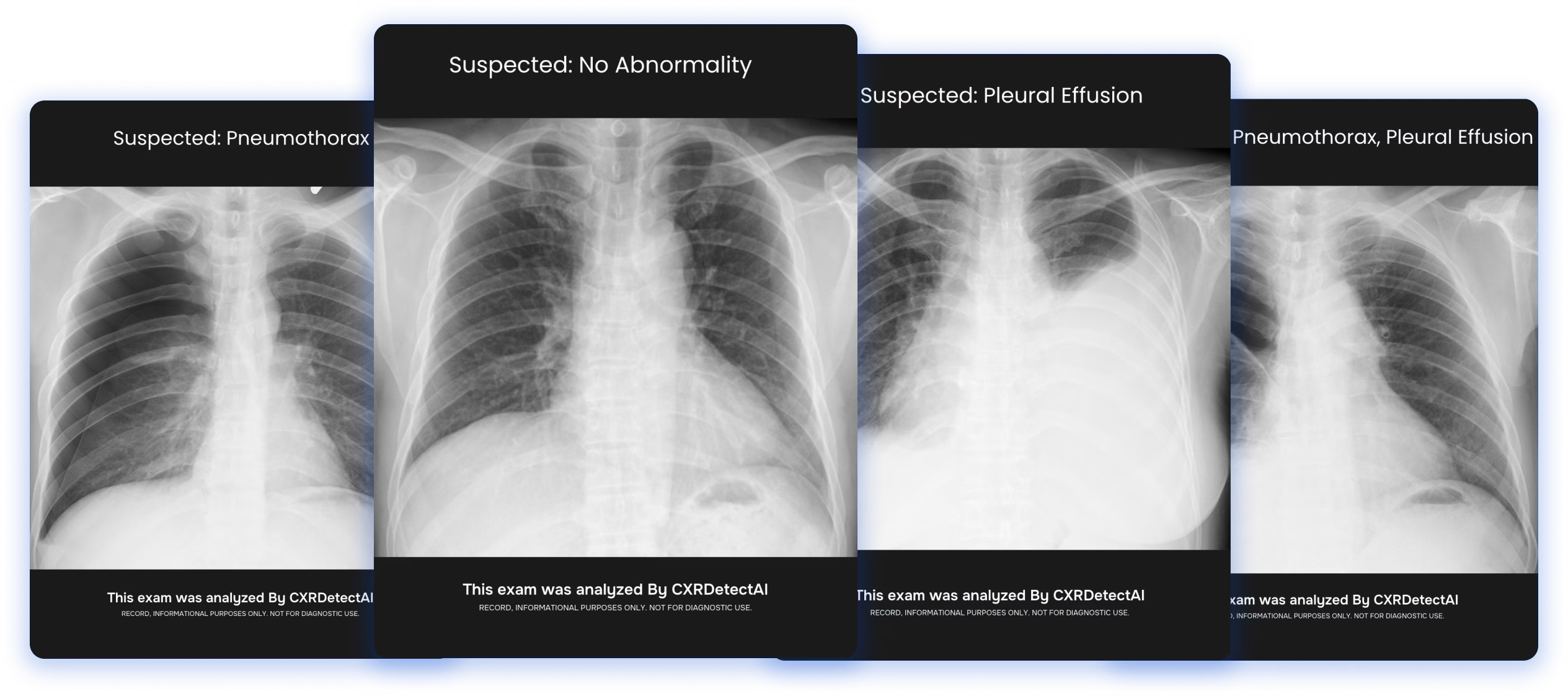

RadioView.AI™ incorporates AI-powered hanging protocol intelligence as a core component of its radiology reading workflow. Its image presentation layer uses content-based inference rather than metadata dependency — meaning studies display correctly even where DICOM descriptions are inconsistent or incomplete. Relevant priors surface automatically, individual reader preferences are learned and applied, and every case opens configured for immediate interpretation.

What makes RadioView.AI™ the right foundation for this capability is not only the intelligence of its display logic, but the compliance infrastructure surrounding it. RadioView.AI™ is both HITRUST and HIPAA Compliant, ensuring that patient data flowing through its protocol and prior-matching processes is protected to the highest standards in healthcare — because in an environment where AI systems ingest and route sensitive imaging data at scale, compliance is not optional. It is foundational.

Conclusion

AI-powered hanging protocols represent the quiet, high-impact modernization radiology workflows have needed for years. By replacing fragile metadata-matching rules with image-content intelligence, enabling accurate prior selection, and learning individual radiologist preferences, these systems eliminate one of the most persistent sources of friction in the reading room. The radiologist’s attention is no longer spent reorganizing images; it is spent on interpretation, judgment, and clinical decision-making. As platforms like RadioView.AI™ advance AI-driven display intelligence with full HITRUST and HIPAA compliance, the era of the broken hanging protocol is coming to a close.

FAQs

1. What is a hanging protocol in radiology?

A set of rules governing how medical images are automatically arranged on a diagnostic workstation when a study is opened — controlling series placement, window settings, multi-monitor layout, and prior study loading.

2. Why do traditional hanging protocols fail so often?

They depend on exact DICOM metadata matches. Because metadata is inconsistently entered across modalities, sites, and technologists, these rules break frequently — forcing radiologists to manually reconfigure displays.

3. How does AI fix the hanging protocol problem?

AI analyzes actual image content using computer vision and deep learning, identifying anatomy and series type from the pixels themselves — producing accurate protocol execution regardless of how the series was labeled in the DICOM header.

4. Can AI hanging protocols learn individual radiologist preferences?

Yes. Modern AI systems observe how individual radiologists interact with their displays and build personalized preference models that are applied proactively on subsequent cases, eliminating manual setup.

5. Is RadioView.AI™ compliant with healthcare data security requirements?

Yes. RadioView.AI™ is both HITRUST and HIPAA Compliant, ensuring all patient imaging data processed through its protocol intelligence is handled with enterprise-grade data protection.